More than 250 people gathered at the University of Pennsylvania last week for Data Rescue Philly, one of the latest examples of a grassroots effort to save environmental and climate change data that scientists fear could vanish under the Trump administration’s many climate deniers.

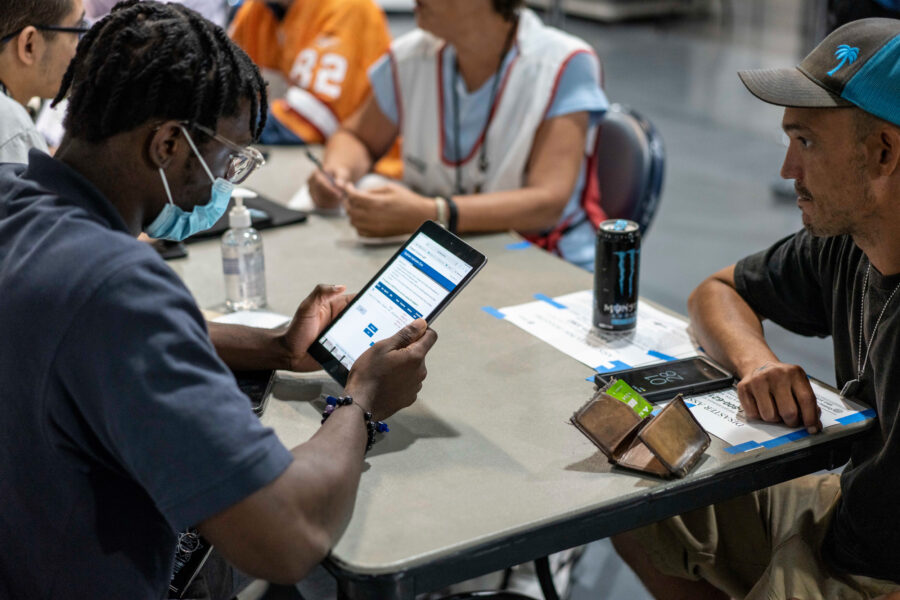

Over two days, volunteers from academia, nonprofits and the tech industry were trained and then preserved data from more than 3,000 websites hosted by the National Oceanic and Atmospheric Administration.

Bethany Wiggin, director of the Penn Program in the Environmental Humanities (PPEH), said the idea emerged from conversations recalling how government data became less accessible during the George W. Bush administration. Wiggin said a scan of agency websites showed that some data sets were archived in multiple locations, while others were more vulnerable.

Those concerns prompted PPEH and Penn Libraries to launch DataRefuge, which approaches the problem like a libraries project, placing “multiple copies [of data] in multiple places,” Wiggin said.

“The main goal is to make sure climate change research doesn’t slow down.”

A recent move by the Wisconsin Department of Natural Resources to remove references to climate change from its website adds urgency to scientists’ concerns. In December, researchers and software engineers held a “guerrilla archiving event” in Toronto that focused on preserving Environmental Protection Agency data, and the leader of that effort attended Data Rescue Philly to offer advice.

DataRefuge events took place this week in Chicago and Indianapolis. The project is open to anyone who wants to attend.

The Penn project was a collaboration with the Environmental Data and Governance Initiative (EDGI), an international group of university researchers and nonprofits that formed after Donald Trump’s victory to coordinate data rescue efforts. The amount of climate-related information stored on federal websites is overwhelming, and includes vital satellite imagery, sea-ice records, pollution inventories and decades of scientific studies. Wiggin said it’s impossible to save everything, and the goal is to prioritize the data most at risk of removal.

InsideClimate News spoke to Wiggin about the motivations, challenges and hopes for the project.

This interview has been edited for length and clarity.

InsideClimate News: What’s the goal of the project?

Wiggin: It’s our position that we don’t have any time to lose. Canadian scientists describe their experience under the Harper administration [which restricted government research and communication on climate change] as ‘the great slow-down’ or ‘the lost decade.’

ICN: How did this get started?

Wiggin: We started swapping stories that we had been hearing from faculty and from other policy people who were emailing one another to say: remember what happened under [President Bush] when the data sets that planners used for climate city resilience were suddenly subject to FOIA [Freedom of Information Act] requests instead of being able to be easily downloaded? Make sure you download that stuff now so you have it.

These kinds of anecdotal conversations started us on a research path looking at what had happened in Canada when Stephen Harper was the prime minister—someone who doesn’t believe in climate change. With an incoming administration which is also not fact-based, we started worrying about ways in which federal and climate data might be made harder to access.

We immediately reached out to [Penn librarians] and started looking at in how many locations are these data sets actually archived. Some are really robust. Others wouldn’t be that hard to get at.

ICN: Who else have you worked with?

Wiggin: The End of Term Harvest worked on the presidential transition in 2008 and 2012. They have precise numbers about the volume of data that they put into the Internet Archive that was lost under those presidential transitions. I called Mark Phillips, the director, really early on, and he said we need some help.

We were starting to develop things in December, and we started noticing that meteorologist and journalist Eric Holthaus was also worried on Twitter. We called him. He and Drew Volpe [a coder and entrepreneur] quickly put up a spreadsheet and they tweeted out this sheet. The spreadsheet asked for researchers to nominate data sets that they wanted saved. And it went kind of nuts.

We decided pretty rapidly that we can help manage this. We have the resources. We have the infrastructure. We have the partners we need. We can do it, and we had no sooner officially made that decision than the story broke in the Washington Post [about scientists frantically copying data].

And then it’s just really grown and grown. People got me in touch with people in Toronto and then this research consortium EDGI has come on. Other people whom I know at Indiana University and University of Oregon and UCLA have reached out to me.

ICN: How are you deciding what data to save?

Wiggin: We’ve been trying to identify data sets based on value to the research community and also identifying vulnerability profiles for different data sets, like how many different other backups are there. If there are lots of backups, they are of course not as vulnerable.

We developed a survey about value and vulnerability that we circulated pretty broadly. In fact, it’s still circulating. There’s been [research] nominated from the Department of Commerce, Agriculture, Forestry, Fish and Wildlife…lots and lots of places.

ICN: Will it be possible to download all the data that is assessed as vulnerable? How long will it take?

Wiggin: We’ve been talking with some climate scientists who are downloading stuff themselves and they’ve allocated four weeks for constant downloading of one data set. This is a long process. This isn’t going to be accomplished at a hackathon.
